How We Built a Multi-Agent Validation System

By marcus-chen | 2026-01-29

How we built a multi-agent AI system for product validation. A technical look at the architecture, agents, and process.

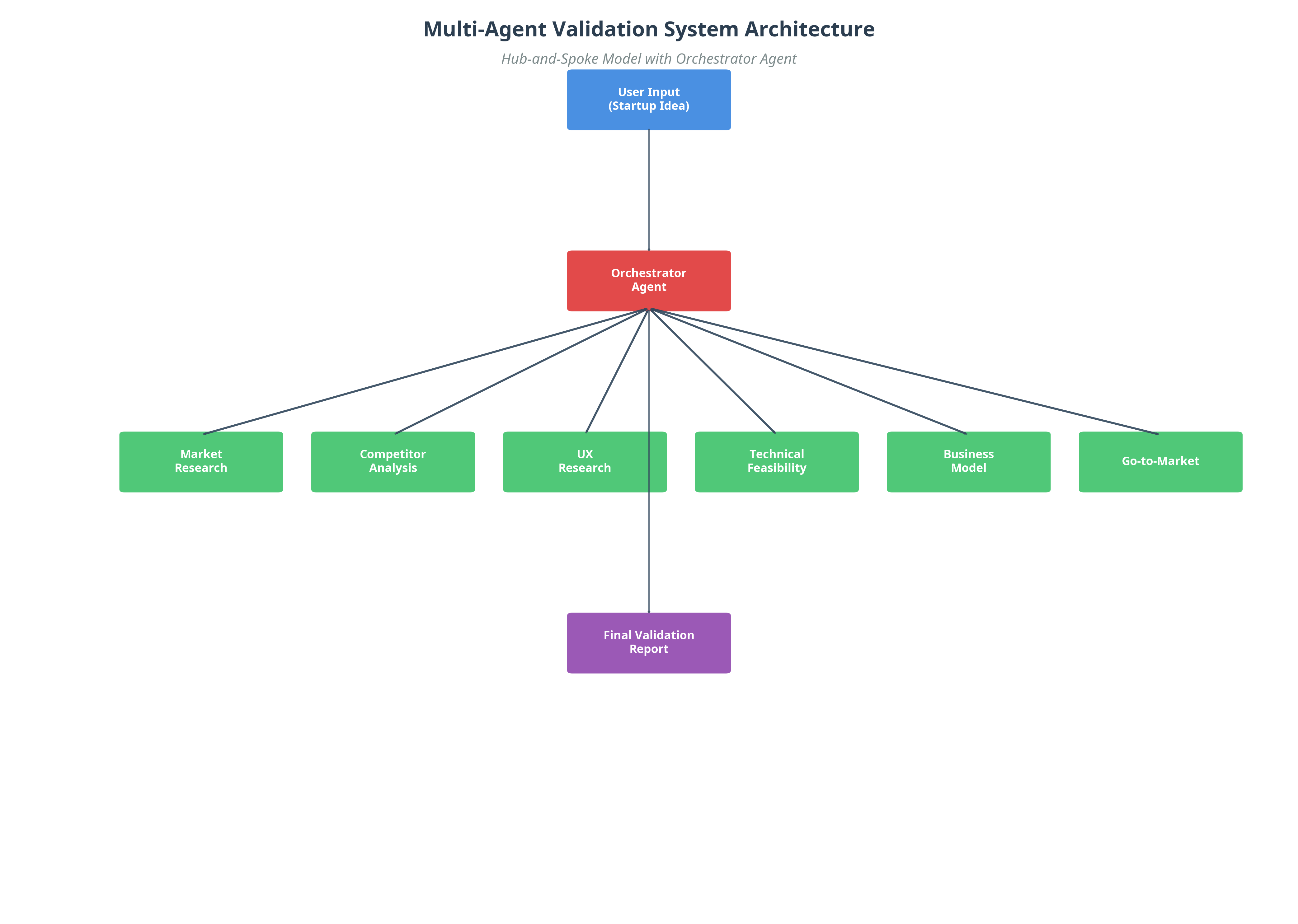

> TL;DR: We built a multi-agent validation system using a hub-and-spoke architecture in which an orchestrator agent coordinates six specialized agents (market research, competitor analysis, UX, technical, financial, and strategy). The agents cross-examine each other's work through structured debate rounds, catching contradictions and blind spots that a single AI model working alone tends to miss.

At ValidateStrategy, we believe that the future of complex problem-solving lies in multi-agent AI systems. While single-agent LLMs are powerful, they lack the specialization, adversarial debate, and emergent intelligence needed for high-stakes tasks like product validation. If you are curious about the conceptual differences, read our comparison of multi-agent vs. single-agent AI systems. Here, we will focus on how we built a multi-agent system from the ground up.

This article is a technical deep dive into our architecture, the design of our agents, and the collaborative process they use to validate a startup idea. If you're a developer or a technical founder curious about the next frontier of AI, this is for you. As Harvard Business Review notes, the shift from monolithic AI to collaborative agent systems represents a fundamental change in how we approach complex problem-solving.

Why We Chose to Build a Multi-Agent System

Our guiding principle was to create a "digital research team" that mirrors the structure of a high-performing product team. We didn't want a single AI that "knows everything"; we wanted a team of specialists who could collaborate and challenge each other.

This led us to a multi-agent architecture where each agent has:

- A Specific Role (e.g., Market Researcher, Competitor Analyst)

- A Unique Knowledge Base (e.g., access to market data APIs, UX research libraries)

- A Distinct "Personality" or Set of Directives (e.g., the "Skeptic Agent" is programmed to be critical and find flaws)
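To make those three ingredients concrete, here is a minimal sketch of how an agent definition could be represented in Python. `AgentSpec`, `system_prompt`, and `market_size_lookup` are hypothetical names chosen for illustration, not our production code, and the tool is a stub standing in for a real data API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

@dataclass
class AgentSpec:
    """Hypothetical config for one specialist: role, directives, and knowledge sources."""
    role: str
    directives: str
    # Knowledge sources exposed as named callables (e.g. wrappers around data APIs).
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def system_prompt(self) -> str:
        # Role + directives (+ tool names) become the system message sent to the base LLM.
        tool_list = ", ".join(sorted(self.tools)) or "none"
        return f"You are the {self.role}. {self.directives} Available tools: {tool_list}."

def market_size_lookup(query: str) -> str:
    # Hypothetical stand-in for a market data API.
    return f"(market data for {query})"

skeptic = AgentSpec("Skeptic Agent", "Be critical and look for flaws in every claim.")
researcher = AgentSpec(
    "Market Researcher",
    "Quantify demand with sources.",
    tools={"market_size_lookup": market_size_lookup},
)
print(skeptic.system_prompt())
# → You are the Skeptic Agent. Be critical and look for flaws in every claim. Available tools: none.
```

Keeping the role, directives, and tools in one object makes it easy to add or swap a specialist without touching the orchestration code.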

The Architecture: A Hub-and-Spoke Model

We use a hub-and-spoke architecture to manage the interactions between our agents. The "Orchestrator Agent" acts as the hub, while the six specialized agents are the spokes.

The Orchestrator Agent

The Orchestrator is the project manager of the system. Its responsibilities are:

- Decomposition: Break down the user's startup idea into a series of tasks for the specialized agents.

- Delegation: Assign each task to the appropriate agent.

- Synthesis: Collect the outputs from all agents.

- Consensus: Facilitate a "debate" between the agents to resolve conflicts and inconsistencies.

- Reporting: Generate the final, unified validation report.
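The responsibilities above map naturally onto a small class. The sketch below is a hypothetical, stripped-down orchestrator, not our implementation: each agent is a plain Python function standing in for an LLM call, the consensus step is omitted because it is covered in the debate section, and method names such as `decompose` and `delegate` are illustrative assumptions.

```python
from typing import Callable, Dict, List

# Hypothetical stand-in for an LLM-backed specialist: task text in, findings out.
Agent = Callable[[str], str]

class Orchestrator:
    def __init__(self, agents: Dict[str, Agent]):
        self.agents = agents

    def decompose(self, idea: str) -> Dict[str, str]:
        # One task per specialist; a real system might ask an LLM to draft these.
        return {name: f"Analyze '{idea}' from the {name} perspective." for name in self.agents}

    def delegate(self, tasks: Dict[str, str]) -> Dict[str, str]:
        # Route each task to the agent registered under the same name.
        return {name: self.agents[name](task) for name, task in tasks.items()}

    def synthesize(self, outputs: Dict[str, str]) -> List[str]:
        # Collect outputs in a stable (alphabetical) order so reports are reproducible.
        return [f"[{name}] {outputs[name]}" for name in sorted(outputs)]

    def report(self, idea: str, sections: List[str]) -> str:
        # Consensus/debate would run between synthesize() and report(); omitted here.
        return f"Validation report: {idea}\n" + "\n".join(sections)

    def run(self, idea: str) -> str:
        tasks = self.decompose(idea)
        outputs = self.delegate(tasks)
        return self.report(idea, self.synthesize(outputs))

agents = {
    "market": lambda task: "Segment looks large.",
    "technical": lambda task: "Core feature is high risk.",
}
report = Orchestrator(agents).run("AI meal planner")
print(report)
```

Because the orchestrator only knows agents by name and signature, the spokes stay independent of one another, which is the main point of the hub-and-spoke layout.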

The Specialized Agents

Each of our six agents is built on a base LLM (such as Claude 3.5 Sonnet or GPT-4) and specialized with its own set of instructions and access to a unique set of tools.

Example: The Competitor Analysis Agent

- Instructions: "Your role is to act as a world-class competitive intelligence analyst. You are critical, data-driven, and focused on finding weaknesses. Your goal is to identify the 'kill chain' for each competitor."

- Tools:

* A web scraper to access competitor websites.

* An API for a real-time search engine (like Perplexity).

* Access to a database of G2 and Capterra reviews.

* A Python script for sentiment analysis.
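The last tool in that list is the simplest to illustrate. The following is a toy, lexicon-based sentiment scorer for competitor reviews, assuming reviews arrive as plain strings. The word lists, the scoring formula, and the `-0.5` threshold are all assumptions for this sketch; a production tool would more likely use a trained sentiment model.

```python
import re
from statistics import mean
from typing import List, Tuple

# Toy lexicon (assumption): a real tool would use a trained sentiment model.
POSITIVE = {"easy", "fast", "love", "great", "reliable"}
NEGATIVE = {"slow", "buggy", "expensive", "confusing", "crash"}

def review_sentiment(text: str) -> float:
    """Score one review in [-1, 1] as (pos - neg) / (pos + neg); 0.0 if no lexicon words appear."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return 0.0 if pos + neg == 0 else (pos - neg) / (pos + neg)

def competitor_weak_spots(reviews: List[str], threshold: float = -0.5) -> Tuple[float, List[str]]:
    """Return the mean score and the reviews at or below `threshold` (candidate weaknesses)."""
    if not reviews:
        return 0.0, []
    scores = [review_sentiment(r) for r in reviews]
    weak = [r for r, s in zip(reviews, scores) if s <= threshold]
    return mean(scores), weak

reviews = [
    "Great tool, easy setup",
    "Slow and buggy since the update",
    "Pricing is fine",
]
avg, weak = competitor_weak_spots(reviews)
print(avg, weak)  # → 0.0 ['Slow and buggy since the update']
```

The strongly negative reviews are the interesting output here: they are raw material for the agent's search for each competitor's weaknesses.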

This combination of specific instructions and specialized tools allows each agent to perform its task at a much higher level than a generalist model.

The Collaborative Process: Debate and Consensus

This is the secret sauce of our system. Once the initial analysis from all six agents is complete, the Orchestrator initiates a multi-round debate.

Round 1: The Cross-Examination

Each agent's report is shared with all other agents. They are programmed to critique each other's work.

- The Business Model Agent might say to the Market Research Agent: "You've identified a large market, but our pricing model suggests the LTV in this segment is too low to be profitable."

- The Technical Feasibility Agent might say to the Go-to-Market Agent: "You're proposing a Q2 launch, but the core feature you're relying on has a high technical risk and will likely take until Q4 to build."

The Orchestrator collects all the critiques and asks the original agents to revise their reports based on the feedback. This iterative process continues until the system reaches a consensus or identifies a critical, unresolvable conflict (which is, in itself, a valuable finding).
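The cross-examination loop can be sketched as follows, under stated assumptions: `critique` and `revise` are hypothetical callbacks that would wrap LLM calls in a real system, an empty critique means "no objection", and `max_rounds` caps the debate so that a persistent disagreement is reported as an unresolved conflict instead of looping forever.

```python
from typing import Callable, Dict, List, Tuple

Critic = Callable[[str, str, str], str]         # (critic, target, report) -> critique or ""
Reviser = Callable[[str, str, List[str]], str]  # (agent, report, critiques) -> revised report

def debate(reports: Dict[str, str], critique: Critic, revise: Reviser,
           max_rounds: int = 3) -> Tuple[Dict[str, str], bool]:
    """Run cross-examination rounds until no agent objects or max_rounds is hit.
    Returns (final_reports, consensus_reached)."""
    for _ in range(max_rounds):
        critiques: Dict[str, List[str]] = {name: [] for name in reports}
        # Every agent reviews every other agent's report.
        for critic in reports:
            for target, report in reports.items():
                if critic == target:
                    continue
                note = critique(critic, target, report)
                if note:
                    critiques[target].append(f"{critic}: {note}")
        if not any(critiques.values()):
            return reports, True  # consensus: nobody objected this round
        # Only agents that received critiques revise their reports.
        reports = {name: revise(name, r, critiques[name]) if critiques[name] else r
                   for name, r in reports.items()}
    return reports, False  # unresolved conflict after max_rounds

def toy_critique(critic: str, target: str, report: str) -> str:
    # Hypothetical rule mirroring the launch-timeline example above.
    if critic == "technical" and "Q2" in report:
        return "core feature likely takes until Q4"
    return ""

def toy_revise(agent: str, report: str, notes: List[str]) -> str:
    return report.replace("Q2", "Q4")

final, agreed = debate({"technical": "Feature X is high risk.", "gtm": "Launch in Q2."},
                       toy_critique, toy_revise)
print(agreed, final["gtm"])  # → True Launch in Q4.
```

Returning the consensus flag alongside the reports lets the orchestrator surface an unresolved conflict as a finding in its own right.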

The Tech Stack

To build this multi-agent system, we used a combination of open-source and proprietary technologies, with Python and LangChain handling orchestration and Claude and GPT-4 serving as base models. According to Gartner's AI predictions, multi-agent architectures are becoming the standard for enterprise AI applications.

Challenges and Learnings

Building a multi-agent system is not without its challenges:

- Prompt Engineering is Agent Design: You're not just writing prompts; you're designing personalities, roles, and directives. This requires a different mindset.

- Managing Complexity: Coordinating the communication and state management between multiple agents can get complex quickly. A robust architecture is key. Y Combinator's research highlights that complexity management is the primary challenge in production AI systems.

- Controlling Costs: Each agent call is an API cost. We've had to implement sophisticated caching and task optimization to keep costs under control.

- Ensuring Consistency: Getting a consistent, high-quality output from a non-deterministic system requires rigorous testing and evaluation.
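On the cost point, one common technique is to memoize model calls so that an identical request is only paid for once. This is a minimal in-memory sketch, not a description of our production caching layer: `CachedLLM` is a hypothetical wrapper, the cache key is a SHA-256 hash of the agent name and prompt, and reusing a cached answer assumes you accept losing some of the model's run-to-run variation in exchange for lower cost.

```python
import hashlib
import json
from typing import Callable, Dict

class CachedLLM:
    """Hypothetical wrapper: identical (agent, prompt) pairs hit the paid API only once."""

    def __init__(self, call: Callable[[str, str], str]):
        self.call = call
        self.cache: Dict[str, str] = {}
        self.misses = 0

    def _key(self, agent: str, prompt: str) -> str:
        # Stable key: hash a canonical JSON encoding of the request.
        payload = json.dumps({"agent": agent, "prompt": prompt}, sort_keys=True)
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()

    def __call__(self, agent: str, prompt: str) -> str:
        key = self._key(agent, prompt)
        if key not in self.cache:
            self.misses += 1  # only cache misses reach the underlying model call
            self.cache[key] = self.call(agent, prompt)
        return self.cache[key]

# Stub model call standing in for a real API request.
llm = CachedLLM(lambda agent, prompt: f"{agent} analysis of: {prompt}")
llm("market", "AI meal planner")
llm("market", "AI meal planner")  # served from cache
llm("ux", "AI meal planner")
print(llm.misses)  # → 2
```

In production you would likely back this with a persistent store and an expiry policy, since market data goes stale.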

Final Thoughts on How to Build a Multi-Agent System

We believe that multi-agent systems are the next major leap in AI. They move us from a world of AI "tools" to a world of AI "teams."

By choosing to build a multi-agent system that embraces specialization, collaboration, and adversarial debate, we unlocked a new level of intelligence for solving problems that are simply too complex for a single AI to handle. Our product validation engine is just the beginning. We envision a future where multi-agent systems are used to tackle everything from scientific research to corporate strategy.

Start Your Validation

See our multi-agent system in action. Submit your startup idea and watch six specialized agents collaborate, debate, and deliver a validation report no single AI could produce.

Start Your Validation and get your comprehensive report in 24 hours.

Why Valid8 Runs This Analysis Better

Building a multi-agent system is one thing. Running it reliably at production scale with cost control, consistency, and actionable output is another. Valid8 solves the engineering challenges described in this article so founders get the benefits of multi-agent analysis without managing the complexity themselves.

- Hub-and-spoke architecture in production: The orchestrator agent decomposes your idea, delegates to six specialists with domain-specific tools, facilitates cross-examination rounds, and synthesizes a unified report, handling all the coordination complexity behind a simple submission form.

- Debate rounds catch what single models miss: After initial analysis, agents cross-examine each other's work. The Business Model agent challenges the Market agent's revenue projections. The Technical agent pushes back on unrealistic launch timelines. These contradiction checks surface blind spots that a single-pass analysis is likely to miss.

- Cost-optimized multi-agent pipeline: Sophisticated caching, task optimization, and evaluation frameworks keep API costs manageable while maintaining the analytical depth of running six specialized LLM calls per analysis phase, delivering enterprise-grade research at startup-friendly pricing.

Frequently Asked Questions

What is a multi-agent system and how do you build one?

A multi-agent system is an AI architecture where multiple specialized agents collaborate to solve complex problems. To build a multi-agent system, you need to define agent roles with specific instructions and tools, create an orchestrator to manage communication, implement a consensus mechanism for conflict resolution, and choose appropriate base LLMs for each agent type. Our system uses Python with LangChain for orchestration and Claude/GPT-4 as base models.

What is the best multi-agent system architecture for product validation?

For product validation, we recommend a hub-and-spoke architecture with an orchestrator agent coordinating specialized agents. Each agent should focus on one domain (market research, competitor analysis, UX, technical feasibility, financial modeling, or strategy). This architecture enables parallel processing, cross-validation of findings, and adversarial debate between agents, producing more robust insights than monolithic approaches.
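Parallel processing is one of the practical payoffs of this layout: because the specialists do not depend on each other during the first pass, the orchestrator can fan out to all six at once. Here is a minimal sketch using Python's standard `asyncio` module, assuming each agent is an async function; `fan_out` and `make_stub` are hypothetical names, and the stub sleeps to simulate network latency instead of calling a model.

```python
import asyncio
from typing import Awaitable, Callable, Dict

AsyncAgent = Callable[[str], Awaitable[str]]

async def fan_out(idea: str, agents: Dict[str, AsyncAgent]) -> Dict[str, str]:
    """Run every specialist concurrently and collect results by agent name."""
    names = list(agents)
    # gather() preserves argument order, so zip() pairs each name with its result.
    results = await asyncio.gather(*(agents[n](idea) for n in names))
    return dict(zip(names, results))

def make_stub(domain: str, delay: float) -> AsyncAgent:
    # Hypothetical stand-in for a network-bound LLM call.
    async def agent(idea: str) -> str:
        await asyncio.sleep(delay)
        return f"{domain} findings for {idea}"
    return agent

domains = ["market", "competitors", "ux", "technical", "financial", "strategy"]
agents = {d: make_stub(d, 0.1) for d in domains}
results = asyncio.run(fan_out("AI meal planner", agents))
print(results["ux"])  # → ux findings for AI meal planner
```

With `asyncio.gather`, the first pass takes roughly as long as the slowest agent instead of the sum of all six calls.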

How do collaborative AI systems improve accuracy over single agents?

Collaborative AI systems improve accuracy through three mechanisms: specialization (each agent excels in its domain), cross-examination (agents critique each other's work), and consensus building (findings must be verified by multiple agents). This adversarial process catches errors, reduces hallucinations, and produces insights that emerge from the interaction between different expert perspectives.
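The consensus-building mechanism can be illustrated with a simple voting rule. In this hypothetical sketch, each agent reports the set of finding IDs it independently supports, and a finding is kept only if at least `min_agents` distinct agents endorse it; the threshold of two, the function name, and the finding IDs are assumptions for illustration.

```python
from collections import defaultdict
from typing import Dict, List, Set

def verified_findings(endorsements: Dict[str, Set[str]], min_agents: int = 2) -> List[str]:
    """Keep findings that at least `min_agents` distinct agents endorse.
    `endorsements` maps agent name -> set of finding IDs it supports."""
    support: Dict[str, Set[str]] = defaultdict(set)
    for agent, findings in endorsements.items():
        for finding in findings:
            support[finding].add(agent)
    return sorted(f for f, agents in support.items() if len(agents) >= min_agents)

votes = {
    "market": {"large-segment", "low-ltv"},
    "financial": {"low-ltv"},
    "strategy": {"low-ltv", "crowded-category"},
}
print(verified_findings(votes))  # → ['low-ltv']
```

Findings that only one agent supports are not discarded outright in practice; they are good candidates for the next round of cross-examination.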

What frameworks are best for building multi-agent applications in Python?

LangChain and LlamaIndex are the leading frameworks for building multi-agent applications in Python. LangChain excels at agent orchestration and tool integration, while LlamaIndex is well suited to knowledge retrieval and RAG applications. For production systems, combine these with FastAPI for the API layer and PostgreSQL with pgvector for vector storage. The choice of base LLM depends on your use case and budget.

How do you handle conflicts between Python AI agents in a multi-agent system?